In a hideous reflection of China’s already-prevalent ‘Social Credit’ system – which assigns each citizen a rating based on government data regarding their economic and social status – The Washington Post reports that Facebook has begun to assign its users a reputation score, predicting their trustworthiness on a scale from zero to one.

Under the guise of its effort to combat ‘fake news’, WaPo notes (citing an interview with Tessa Lyons, the product manager who is in charge of fighting misinformation) that the previously unreported ratings system, which Facebook has developed over the last year, has evolved to include measuring the credibility of users to help identify malicious actors.

A user’s trustworthiness score between zero and one isn’t meant to be an absolute indicator of a person’s credibility, Lyons told the publication, nor is there a single unified reputation score that users are assigned.

“One of the signals we use is how people interact with articles,” Lyons said in a follow-up email.

“For example, if someone previously gave us feedback that an article was false and the article was confirmed false by a fact-checker, then we might weight that person’s future false news feedback more than someone who indiscriminately provides false news feedback on lots of articles, including ones that end up being rated as true.”

The score is one measurement among thousands of behavioral clues that Facebook now takes into account as it seeks to understand risk.

“I like to make the joke that, if people only reported things that were [actually] false, this job would be so easy!” said Lyons in the interview. “People often report things that they just disagree with.”

But how these new credibility systems work is highly opaque.

“Not knowing how [Facebook is] judging us is what makes us uncomfortable,” said Claire Wardle, director of First Draft, a research lab within Harvard Kennedy School that focuses on the impact of misinformation and is a fact-checking partner of Facebook, of the efforts to assess people’s credibility.

“But the irony is that they can’t tell us how they are judging us – because if they do, the algorithms that they built will be gamed.”

This all sounds ominously like China’s Orwellian ‘social credit’ system. In addition to being blocked from flights and trains, those with low social credit scores have had their names shamed on a public list, the Global Times noted, citing Meng Wei, spokeswoman for the National Development and Reform Commission, via news website chinanews.com.

Those on the list could be denied loans, grants, and other forms of government assistance, Wei added.

“Hou’s phrase that the ‘discredited people become bankrupt’ makes the point, but is an oversimplification,” Zhi Zhenfeng, a legal expert at the Chinese Academy of Social Sciences in Beijing, told the Global Times.

“How the person is restricted in terms of public services or business opportunities should be in accordance with how and to what extent he or she lost his credibility.

“The punishment should match the deed.

“Discredited people deserve legal consequences.

“This is definitely a step in the right direction to building a society with credibility.”

Many observers have likened China’s ‘social credit’ system to that shown in Netflix’s Black Mirror episode ‘Nosedive’, which depicts a world where people can rate each other from one to five stars for every interaction they have.

It seems Silicon Valley’s leaders saw the same episode…

Coming to America soon…

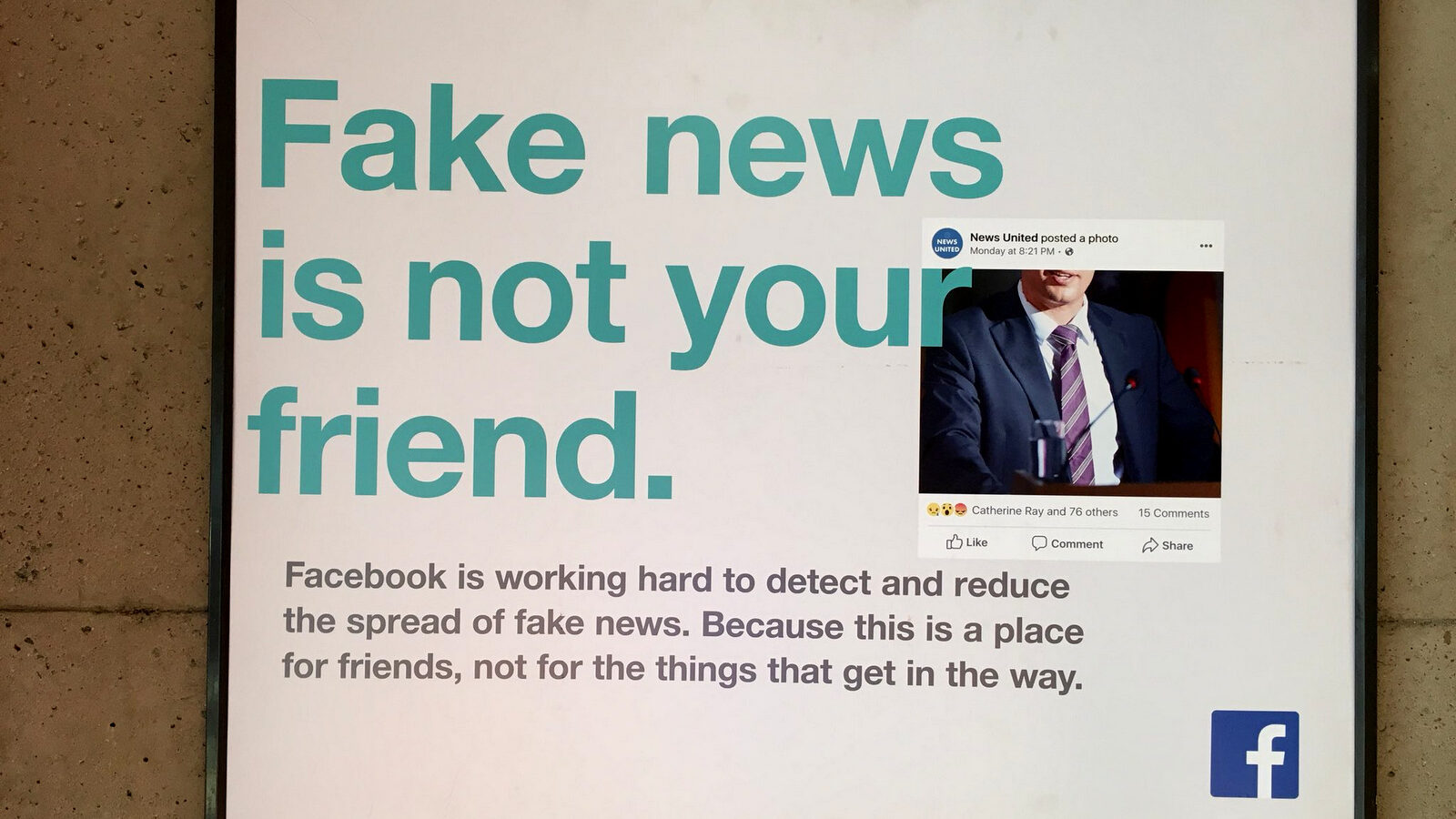

Top Photo | A Facebook ad spotted in the DC Metro by Twitter user @unsuckdcmetro